NextGenBeing

Listen to Article

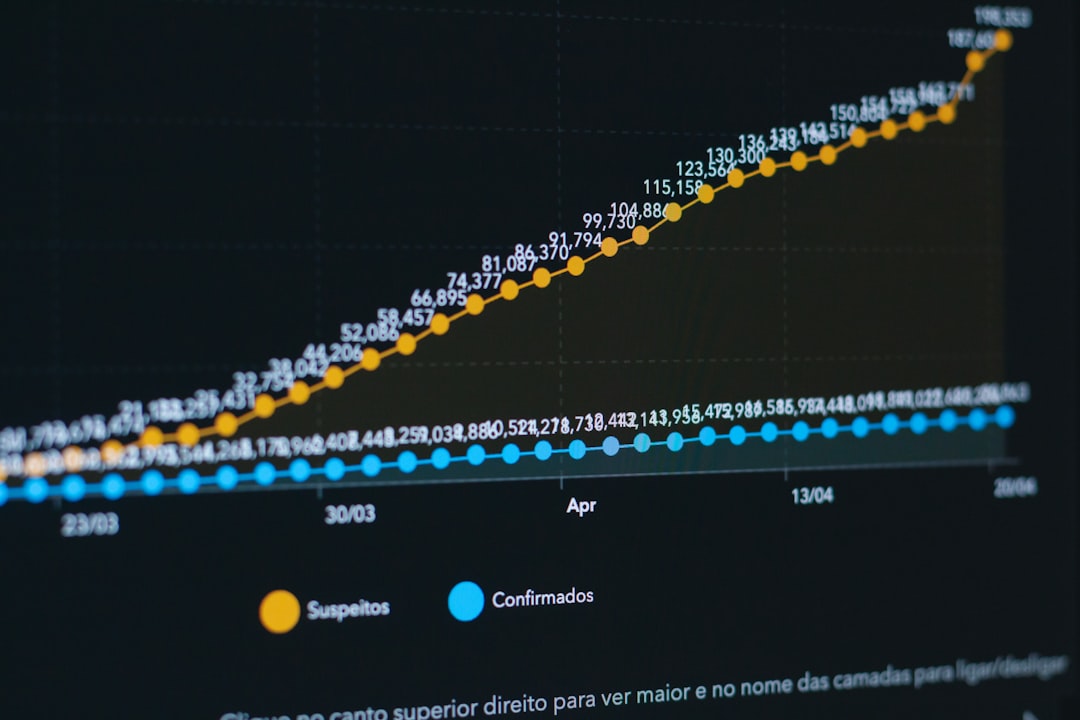

Last August, our team hit a wall. We'd been running our containerized microservices architecture smoothly at around 100k requests per day. Then we got featured on Product Hunt, and traffic exploded to 2M requests within 48 hours. Our Docker containers started failing in ways we'd never seen during development. Image pulls timed out. Builds that took 3 minutes locally took 45 minutes in CI/CD. Memory usage spiked unpredictably. Our container orchestration layer couldn't scale fast enough.

I spent the next six months rebuilding our entire containerization strategy from the ground up. We went from amateur Docker usage to a production-grade setup that now handles 50M+ requests daily across 200+ containers. The journey taught me that most Docker tutorials and guides focus on getting containers running, not on making them production-ready at scale.

Here's what I wish someone had told me before we hit that wall. This isn't theory from documentation—it's battle-tested patterns from real production failures and the solutions that actually worked.

The Image Size Problem Nobody Talks About

When we started with Docker, our images were massive. Our main Node.js API image was 1.2GB. Our Python data processing service was 980MB. We didn't think much of it until we tried to scale horizontally during that traffic spike.

Here's what happened: When Kubernetes tried to spin up 50 new pods to handle the load, each node had to pull these massive images. At 1.2GB per image with 10 pods per node, we were transferring 12GB per node. Our container registry couldn't handle the bandwidth. Pods took 8-12 minutes just to start because they were waiting for image pulls. By the time they were ready, the traffic spike had either crashed our existing pods or moved on.

I learned that image size isn't just about storage—it's about deployment velocity. Every megabyte in your image multiplies across every container instance, every deployment, every scale-up event. When you're trying to auto-scale during a traffic spike, those seconds matter.

Multi-Stage Builds: The Game Changer

The first thing I did was rewrite every Dockerfile using multi-stage builds. Here's our original Node.js Dockerfile:

FROM node:18
WORKDIR /app
COPY package*.json ./
RUN npm install
COPY . .
RUN npm run build
EXPOSE 3000
CMD ["node", "dist/index.js"]

Simple, right? But this pulled in the full Node.js image (900MB), kept all the development dependencies, and included source files we didn't need in production. Our final image was 1.2GB.

Here's what we use now:

# Build stage
FROM node:18-alpine AS builder
WORKDIR /app
COPY package*.json ./
# Install all dependencies: the TypeScript build needs devDependencies
RUN npm ci
COPY . .
RUN npm run build
# Drop devDependencies so only production packages reach the final stage
RUN npm prune --omit=dev && \
    npm cache clean --force

# Production stage
FROM node:18-alpine AS production
WORKDIR /app
RUN addgroup -g 1001 -S nodejs && \
    adduser -S nodejs -u 1001
COPY --from=builder --chown=nodejs:nodejs /app/dist ./dist
COPY --from=builder --chown=nodejs:nodejs /app/node_modules ./node_modules
COPY --from=builder --chown=nodejs:nodejs /app/package*.json ./
USER nodejs
EXPOSE 3000
CMD ["node", "dist/index.js"]

This dropped our image from 1.2GB to 180MB. Let me break down what made the difference:

Alpine base images: Switching from node:18 (900MB) to node:18-alpine (170MB) cut 730MB immediately. Alpine is a minimal Linux distribution designed for containers. We use Alpine for everything now except when we absolutely need glibc compatibility.

Separate build and production stages: The builder stage has all our build tools and source code. The production stage only copies the compiled artifacts and production dependencies. All the TypeScript source, dev dependencies, and build tools stay in the builder stage and never make it to the final image.

npm ci instead of npm install: This was a subtle but important change. npm ci is designed for CI/CD environments. It's faster, more reliable, and crucially, it only installs what's in package-lock.json. No surprises.

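Cache cleaning: npm cache clean --force removes npm's cache directory, which can be another 50-100MB depending on your dependencies. In this multi-stage build the production stage never copies that cache anyway, so the step mainly keeps the builder layer lean; in a single-stage image it is what keeps those megabytes out of the final image.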

Here's the output from building this new Dockerfile:

$ docker build -t api:optimized .
[+] Building 127.3s (16/16) FINISHED
=> [builder 1/6] FROM node:18-alpine 3.2s
=> [builder 2/6] WORKDIR /app 0.1s
=> [builder 3/6] COPY package*.json ./ 0.1s
=> [builder 4/6] RUN npm ci --only=production 89.4s
=> [builder 5/6] COPY . . 0.3s
=> [builder 6/6] RUN npm run build 28.1s
=> [production 1/5] FROM node:18-alpine 0.0s
=> [production 2/5] RUN addgroup -g 1001 -S nodejs 0.4s
=> [production 3/5] COPY --from=builder /app/dist 0.2s
=> [production 4/5] COPY --from=builder /app/node_mod 0.8s
=> [production 5/5] COPY --from=builder /app/package* 0.1s
=> exporting to image 4.7s
=> => exporting layers 4.6s
=> => writing image sha256:a3f9b2... 0.0s

$ docker images | grep api
api   optimized   a3f9b2c8d1e4   2 minutes ago   180MB
api   old         f8e2a1b9c3d7   1 week ago      1.2GB

That 180MB versus 1.2GB difference transformed our deployment velocity. When we needed to scale up 20 pods during a traffic spike, we went from 8-12 minutes to under 90 seconds. In the worst case, where each new pod lands on a node that has never pulled the image, that's 20 pods × 180MB = 3.6GB versus 20 pods × 1.2GB = 24GB of image data to transfer; a node that already has the image cached skips the pull.
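Those figures are a worst case. A node pulls an image once and serves later pods from its local cache, so the real cost of a scale-out depends on how many nodes start cold. A minimal sketch of that arithmetic, assuming pods spread evenly and every node receiving them has never pulled the image (`scaleOutPullBytes` and `podsPerNode` are illustrative, not measured):

```javascript
// Estimate image bytes pulled when scaling out.
// Assumptions: pods spread evenly across nodes, and every node receiving
// them starts with a cold image cache. Each node pulls the image once.
function scaleOutPullBytes(newPods, podsPerNode, imageBytes) {
  if (podsPerNode < 1) throw new RangeError('podsPerNode must be >= 1');
  const coldNodes = Math.ceil(newPods / podsPerNode);
  return coldNodes * imageBytes;
}

const MB = 1e6;
const GB = 1e9;
// One pod per cold node reproduces the 20 x image-size worst case.
console.log(scaleOutPullBytes(20, 1, 1200 * MB) / GB); // 24
console.log(scaleOutPullBytes(20, 1, 180 * MB) / GB);  // 3.6
// Ten pods per node: only two nodes actually pull the 1.2GB image.
console.log(scaleOutPullBytes(20, 10, 1200 * MB) / GB); // 2.4
```

Even with caching, image size still dominates the time each cold node spends before its first pod can start.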

Layer Caching: The Secret to Fast Builds

But image size was only half the battle. Our CI/CD builds were taking forever—45 minutes for a simple code change. The problem was layer caching, or rather, our complete lack of understanding about how it worked.

Docker builds images in layers. Each instruction that changes the filesystem (RUN, COPY, ADD) creates a new layer, and Docker caches the result of every build step so it can reuse it if nothing has changed. The key phrase is "if nothing has changed." If a step's inputs change, Docker invalidates the cache for that step and all subsequent steps.

Here's where we were shooting ourselves in the foot:

# BAD: This invalidates cache on every build
FROM node:18-alpine
WORKDIR /app
# Copies EVERYTHING, including package.json
COPY . .
# Cache invalidated every time code changes
RUN npm ci
RUN npm run build

Every time we changed a single line of code, Docker had to reinstall all our dependencies because we'd copied the entire codebase before running npm ci. With 200+ npm packages, that was 3-4 minutes of unnecessary work on every build.

The fix is to order your Dockerfile instructions from least frequently changed to most frequently changed:

# GOOD: Cache-optimized layer ordering
FROM node:18-alpine
WORKDIR /app
# Copy only dependency files first
COPY package*.json ./
# Install dependencies (cached unless package.json or package-lock.json changes)
RUN npm ci
# Copy source code last (changes frequently)
COPY . .
# Build (only runs if source changes)
RUN npm run build

Now when we change application code, Docker reuses the cached dependency layer. Our build times dropped from 45 minutes to 3-5 minutes for code changes. Only when we update dependencies does it take the full time.

Here's the build output showing cache hits:

$ docker build -t api:v2 .
[+] Building 4.2s (12/12) FINISHED
=> [1/6] FROM node:18-alpine CACHED
=> [2/6] WORKDIR /app CACHED
=> [3/6] COPY package*.json ./ CACHED
=> [4/6] RUN npm ci --only=production CACHED
=> [5/6] COPY . . 0.3s
=> [6/6] RUN npm run build 3.1s

See those "CACHED" markers? That's Docker reusing previous layers. Only the source copy and build steps ran, saving us 89 seconds of dependency installation.
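The invalidation rule behaves like a hash chain: each step's cache key covers the previous step's key, the instruction text, and (for COPY) the contents of the copied files, so one change cascades to every later step. The sketch below is a simplified model of that idea, not BuildKit's actual cache-key algorithm; the project files are hypothetical:

```javascript
const crypto = require('crypto');

// Simplified model of Docker's build cache: a step's key hashes the parent
// step's key, the instruction text, and the contents of any files it copies.
// Real BuildKit keys are more involved; this only shows the cascading effect.
function cacheKeys(steps, files) {
  let parent = '';
  return steps.map(({ instr, copies = [] }) => {
    const hash = crypto.createHash('sha256').update(parent).update(instr);
    for (const name of copies) hash.update(name).update(files[name] ?? '');
    parent = hash.digest('hex');
    return parent;
  });
}

// Number of leading steps whose keys match between two builds (cache hits).
function cachedSteps(before, after) {
  let n = 0;
  while (n < before.length && before[n] === after[n]) n++;
  return n;
}

// Hypothetical project files; the second build changes only application code.
const oldFiles = { 'package.json': '{"deps":1}', 'src/index.ts': 'v1' };
const newFiles = { ...oldFiles, 'src/index.ts': 'v2' };
const all = Object.keys(oldFiles);

const bad = [
  { instr: 'FROM node:18-alpine' },
  { instr: 'COPY . .', copies: all },
  { instr: 'RUN npm ci' },
  { instr: 'RUN npm run build' },
];
const good = [
  { instr: 'FROM node:18-alpine' },
  { instr: 'COPY package*.json ./', copies: ['package.json'] },
  { instr: 'RUN npm ci' },
  { instr: 'COPY . .', copies: all },
  { instr: 'RUN npm run build' },
];

console.log('bad order, cached steps:', cachedSteps(cacheKeys(bad, oldFiles), cacheKeys(bad, newFiles)));   // 1
console.log('good order, cached steps:', cachedSteps(cacheKeys(good, oldFiles), cacheKeys(good, newFiles))); // 3
```

In the good ordering, the expensive `npm ci` step keeps its key after a code-only change, which is exactly the CACHED line in the build output.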

The .dockerignore File You're Probably Missing

Another rookie mistake we made: we weren't using .dockerignore files. This meant every COPY . . instruction was copying our entire project directory, including node_modules, .git, test files, documentation, and local environment files.

This caused three problems:

- Slow context transfer: Docker has to send your entire build context to the Docker daemon before building. Our context was 400MB because it included node_modules and .git history. This took 15-20 seconds just to start the build.

- Cache invalidation: Copying unnecessary files meant our cache was invalidated by changes to files that didn't matter (like README updates or test file changes).

- Security risks: We were accidentally copying .env files, SSH keys, and other sensitive data into our images.

Here's the .dockerignore file we now use for every project:

# .dockerignore
node_modules
npm-debug.log
.git
.gitignore
.env
.env.*
*.md
LICENSE
.vscode
.idea
coverage
.nyc_output
dist
build
*.log
.DS_Store
Thumbs.db
*.swp
*.swo
*~
.pytest_cache
__pycache__
*.pyc
.coverage
htmlcov
.tox
.mypy_cache

After adding this, our build context dropped from 400MB to 12MB. Context transfer time went from 15-20 seconds to under 1 second. More importantly, we stopped invalidating cache when we updated documentation or test files.

Here's the before and after:

# Before .dockerignore
$ docker build -t api:v1 .
[+] Building 2.3s (2/2) FINISHED
=> [internal] load build context 18.4s
=> => transferring context: 421.34MB 18.3s

# After .dockerignore
$ docker build -t api:v2 .
[+] Building 0.4s (2/2) FINISHED
=> [internal] load build context 0.8s
=> => transferring context: 12.48MB 0.7s

That 18-second difference happens on every build. With 50+ builds per day across our team, we were wasting 15 minutes of build time daily just transferring unnecessary files.
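The ignore patterns have one subtlety worth knowing: unlike .gitignore, .dockerignore patterns are matched against paths relative to the root of the build context, so entries like `*.md`, `__pycache__`, and `*.pyc` only match at the top level, and catching nested copies takes a `**/` prefix (for example `**/__pycache__`). The sketch below approximates that matching (Go `filepath.Match` semantics plus Docker's `**`) to make the difference visible. It skips `!` exception rules and `[...]` character classes, so treat it as an illustration rather than a reimplementation:

```javascript
// Approximate .dockerignore matching: '*' and '?' stay within one path
// segment, '**' spans directories, and patterns are anchored at the
// build-context root. '!' exceptions and [classes] are not handled.
function patternToRegExp(pattern) {
  const p = pattern.replace(/^\.?\//, '').replace(/\/+$/, '');
  let re = '';
  for (let i = 0; i < p.length; i++) {
    const c = p[i];
    if (c === '*' && p[i + 1] === '*') {
      i++;
      if (p[i + 1] === '/') {
        i++;
        re += '(?:.*/)?'; // '**/' matches zero or more directories
      } else {
        re += '.*';
      }
    } else if (c === '*') {
      re += '[^/]*';
    } else if (c === '?') {
      re += '[^/]';
    } else {
      re += c.replace(/[.+^${}()|[\]\\]/g, '\\$&');
    }
  }
  return new RegExp(`^${re}$`);
}

// A path is excluded if it, or any of its parent directories, matches.
function isIgnored(path, patterns) {
  const regexes = patterns.map(patternToRegExp);
  const parts = path.split('/');
  for (let k = 1; k <= parts.length; k++) {
    const prefix = parts.slice(0, k).join('/');
    if (regexes.some((r) => r.test(prefix))) return true;
  }
  return false;
}

const rules = ['node_modules', '.env.*', '*.md', '__pycache__', '**/*.pyc'];
console.log(isIgnored('node_modules/lodash/index.js', rules)); // true
console.log(isIgnored('docs/guide.md', rules));                // false: '*.md' is root-only
console.log(isIgnored('worker/__pycache__/app.pyc', rules));   // true, via '**/*.pyc'
```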

Security: The Production Reality Check

Three months after we launched, we got a security audit from a potential enterprise customer. They found 47 vulnerabilities in our container images. Not in our code—in our base images and dependencies. Some were critical. We almost lost the deal.

The problem was that we were using latest tags and never updating our base images. Our Dockerfiles looked like this:

FROM node:latest

That latest tag had resolved to an image that was 6 months old with known security vulnerabilities. We thought "latest" meant "most recent," but it's just a mutable tag: it points at whatever the image maintainer last tagged as latest, and a build machine keeps reusing the copy it already has unless you force a refresh (for example with docker build --pull).

Base Image Selection and Versioning

Here's what we do now for every Dockerfile:

# Pin to specific version with SHA256 digest
FROM node:18.19.0-alpine3.19@sha256:435dcad253bb5b7f347ebc69c8cc52de7c912eb7241098b920f2fc2d7843183d AS builder

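This pins not just to a specific version (18.19.0) but to the exact image digest. When a reference includes a digest, Docker pulls by that content hash and ignores the tag, so even if someone compromises the registry and pushes a malicious image under the same tag, our builds keep getting the exact image we pinned. If that digest ever disappears from the registry, the build fails instead of silently using something else.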
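A digest pin holds because a digest is a content address: the `sha256:` value is the SHA-256 hash of the image manifest's exact bytes, so different content cannot hide behind the same digest. A minimal sketch of that check; `digestOf` and `verifyPinned` are hypothetical helpers, and the stand-in bytes are not a real manifest:

```javascript
const crypto = require('crypto');

// An image digest is "sha256:" followed by the hex SHA-256 of the exact
// manifest bytes, so the reference itself identifies the content.
function digestOf(bytes) {
  return 'sha256:' + crypto.createHash('sha256').update(bytes).digest('hex');
}

// Refuse content whose hash differs from the pinned digest.
function verifyPinned(bytes, pinnedDigest) {
  const actual = digestOf(bytes);
  if (actual !== pinnedDigest) {
    throw new Error(`digest mismatch: expected ${pinnedDigest}, got ${actual}`);
  }
  return actual;
}

// Stand-in bytes; a real manifest is a JSON document fetched from the registry.
const pinned = digestOf(Buffer.from('hello'));
console.log(pinned);
// sha256:2cf24dba5fb0a30e26e83b2ac5b9e29e1b161e5c1fa7425e73043362938b9824

try {
  verifyPinned(Buffer.from('tampered'), pinned); // same "tag", different bytes
} catch (err) {
  console.log(err.message.startsWith('digest mismatch')); // true
}
```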

We maintain a spreadsheet tracking base image versions across all our services. Every month, we review and update them. Here's our process:

- Check for security advisories on the base images we use

- Test updated images in staging

- Roll out updates service by service

- Document any breaking changes

We also scan our images with Trivy before pushing to production:

$ trivy image api:v2.1.0
api:v2.1.0 (alpine 3.19.0)
===========================
Total: 2 (UNKNOWN: 0, LOW: 2, MEDIUM: 0, HIGH: 0, CRITICAL: 0)
┌───────────────┬────────────────┬──────────┬───────────────────┬───────────────────┬────────────────────────────────┐
│ Library       │ Vulnerability  │ Severity │ Installed Version │ Fixed Version     │ Title                          │
├───────────────┼────────────────┼──────────┼───────────────────┼───────────────────┼────────────────────────────────┤
│ libcrypto3    │ CVE-2024-0727  │ LOW      │ 3.1.4-r0          │ 3.1.4-r1          │ openssl: denial of service via │
│               │                │          │                   │                   │ null dereference               │
└───────────────┴────────────────┴──────────┴───────────────────┴───────────────────┴────────────────────────────────┘

This caught a critical vulnerability in our image last month before we deployed to production. The fix was simple—update the base image to the patched version—but catching it early saved us from a potential security incident.
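Trivy can enforce a gate by itself: `trivy image --exit-code 1 --severity HIGH,CRITICAL api:v2.1.0` exits non-zero when it finds vulnerabilities at those severities. If you want custom rules on top (an allowlist, different thresholds per service), the JSON report from `--format json` is easy to post-process. A minimal sketch, assuming the report's `Results[].Vulnerabilities[]` layout with `VulnerabilityID`, `PkgName`, and `Severity` fields; `blockingFindings` is a hypothetical helper and the sample object is hand-written, not real scan output:

```javascript
// Collect vulnerabilities at blocking severities from a Trivy JSON report.
// Assumed shape: { Results: [{ Target, Vulnerabilities: [{ VulnerabilityID, PkgName, Severity }] }] }
function blockingFindings(report, blocking = ['HIGH', 'CRITICAL']) {
  const found = [];
  for (const result of report.Results ?? []) {
    // Targets without findings may omit Vulnerabilities entirely.
    for (const vuln of result.Vulnerabilities ?? []) {
      if (blocking.includes(vuln.Severity)) {
        found.push(`${vuln.VulnerabilityID} (${vuln.Severity}) in ${vuln.PkgName}`);
      }
    }
  }
  return found;
}

// Hand-written sample mirroring the scan above.
const sample = {
  Results: [
    {
      Target: 'api:v2.1.0 (alpine 3.19.0)',
      Vulnerabilities: [
        { VulnerabilityID: 'CVE-2024-0727', PkgName: 'libcrypto3', Severity: 'LOW' },
      ],
    },
  ],
};

const findings = blockingFindings(sample);
if (findings.length > 0) {
  console.error(`Blocking vulnerabilities:\n${findings.join('\n')}`);
  process.exitCode = 1; // fail the CI step
} else {
  console.log('No HIGH or CRITICAL findings');
}
```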

Running as Non-Root: The Principle of Least Privilege

By default, Docker containers run as root. This is a massive security risk. If an attacker compromises your application and breaks out of the container, they have root access to the host system.

We learned this the hard way when a penetration tester got shell access to one of our containers through a command injection vulnerability in a legacy API endpoint. Because the container was running as root, they were able to:

- Read sensitive environment variables

- Access mounted volumes with production data

- Make network requests to internal services

- Attempt to escape the container

Fortunately, they were a friendly pen tester, not a real attacker. But it scared us into fixing our security posture immediately.

Now every Dockerfile creates and uses a non-root user:

FROM node:18-alpine
# Create app directory
WORKDIR /app
# Create non-root user
RUN addgroup -g 1001 -S nodejs && \
    adduser -S nodejs -u 1001
# Copy files with correct ownership
COPY --chown=nodejs:nodejs package*.json ./
RUN npm ci --omit=dev
COPY --chown=nodejs:nodejs . .
# Switch to non-root user
USER nodejs
EXPOSE 3000
CMD ["node", "index.js"]

The key parts:

- addgroup and adduser: Creates a system group and user with specific IDs (1001). Using consistent IDs across containers helps with volume permissions.

- --chown flag: Sets ownership of copied files to our non-root user. Without it, the files are owned by root: with default permissions the nodejs user can still read them but can't modify them, which breaks anything the app expects to write.

- USER directive: Switches to the non-root user for all subsequent commands and the final container runtime.
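The Dockerfile's USER instruction can also be backed up inside the application. A small sketch of a startup guard that refuses to run as UID 0; `assertNotRoot` is a hypothetical helper, and `process.geteuid()` exists only on POSIX platforms, so the check is skipped elsewhere:

```javascript
// Startup guard: refuse to run as root (UID 0).
// process.geteuid exists only on POSIX platforms; elsewhere the check is skipped.
function assertNotRoot(
  euid = typeof process.geteuid === 'function' ? process.geteuid() : null,
) {
  if (euid === null) return null; // not POSIX: nothing to check
  if (euid === 0) {
    throw new Error('Refusing to run as root; add a USER instruction to the Dockerfile');
  }
  return euid;
}
```

Calling `assertNotRoot()` once, before `app.listen(...)`, makes a misconfigured image fail at startup instead of quietly serving traffic as root.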

We also configure our orchestration layer to enforce non-root execution:

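# Kubernetes Pod Security Context (pod spec excerpt)
spec:
  securityContext:            # pod-level settings
    runAsNonRoot: true
    runAsUser: 1001
    runAsGroup: 1001
    fsGroup: 1001
  containers:
    - name: api
      securityContext:        # container-level settings
        allowPrivilegeEscalation: false
        capabilities:
          drop:
            - ALL
        readOnlyRootFilesystem: true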

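This belt-and-suspenders approach means that even if someone forgets to add USER to a Dockerfile, the container still won't run as root: runAsUser makes Kubernetes start it as UID 1001, and runAsNonRoot makes the kubelet refuse to start any container that would otherwise run as UID 0.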

Read-Only Filesystems and Volume Management

Another security practice we adopted: read-only root filesystems. Most applications don't need to write to the filesystem except for specific directories like /tmp or log directories.

Here's our pattern:

FROM node:18-alpine
WORKDIR /app
# Create non-root user
RUN addgroup -g 1001 -S nodejs && \
    adduser -S nodejs -u 1001
# Create writable directories
RUN mkdir -p /app/logs /app/tmp && \
    chown -R nodejs:nodejs /app/logs /app/tmp
COPY --chown=nodejs:nodejs package*.json ./
RUN npm ci --omit=dev
COPY --chown=nodejs:nodejs . .
USER nodejs
# Application writes logs here
VOLUME ["/app/logs"]
EXPOSE 3000
CMD ["node", "index.js"]

Then in our Kubernetes deployment:

spec:
  containers:
    - name: api
      image: api:v2.1.0
      securityContext:
        readOnlyRootFilesystem: true
      volumeMounts:
        - name: logs
          mountPath: /app/logs
        - name: tmp
          mountPath: /app/tmp
  volumes:
    - name: logs
      emptyDir: {}
    - name: tmp
      emptyDir: {}

This prevents attackers from modifying application files or installing malicious binaries even if they compromise the application. They can only write to the specific directories we've mounted as volumes.

We discovered this was important when we had a Redis container get compromised through an unpatched vulnerability. The attacker tried to download and execute a cryptocurrency miner. Because we had a read-only filesystem, the download failed. Our monitoring caught the failed attempts, and we patched the vulnerability before any real damage occurred.
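With `readOnlyRootFilesystem: true`, a forgotten volume mount surfaces as a write error at some unpredictable point later. One option is to check the expected writable paths once at startup. A minimal sketch using Node's `fs.accessSync` with `fs.constants.W_OK`; `unwritableDirs` is a hypothetical helper and the directory list simply mirrors the mounts above:

```javascript
const fs = require('fs');

// Return the directories this process cannot write to. On a read-only root
// filesystem, access(W_OK) fails with EROFS for paths not backed by a volume;
// missing directories fail with ENOENT.
function unwritableDirs(dirs) {
  return dirs.filter((dir) => {
    try {
      fs.accessSync(dir, fs.constants.W_OK);
      return false;
    } catch {
      return true;
    }
  });
}

// Paths match the volumeMounts above; adjust to your own layout.
const missing = unwritableDirs(['/app/logs', '/app/tmp']);
if (missing.length > 0) {
  // In production you might exit here instead of only logging.
  console.error(`Not writable: ${missing.join(', ')} (check volumeMounts)`);
}
```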

Resource Management: The Scaling Nightmare

When we first hit 2M requests per day, our containers started behaving erratically. Some would consume 4GB of memory and get OOM killed. Others would max out CPU and slow to a crawl. We had no resource limits configured, so containers were competing for resources on the same node.

The worst incident happened at 3am on a Saturday. Our Python data processing service had a memory leak. Without resource limits, it consumed all 32GB of RAM on its node, causing the kernel OOM killer to start randomly killing processes. It killed our database proxy, which crashed our entire API layer. We were down for 45 minutes while I scrambled to restart everything.

Setting Realistic Resource Limits

Here's what we learned about resource limits the hard way:

Don't guess—measure first. We spent two weeks monitoring our containers in production with no limits, collecting metrics on actual resource usage. For each service, we tracked:

- P50, P95, and P99 memory usage

- P50, P95, and P99 CPU usage

- Memory usage during startup

- CPU usage during peak load

- Resource usage during background jobs
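Percentiles like these are computed from raw samples. Monitoring backends differ in how they do it (Prometheus, for instance, usually estimates them from histogram buckets), but the nearest-rank method is the simplest definition: sort the samples and take the one at rank ceil(p × n / 100). A sketch with hypothetical memory samples, not our production data:

```javascript
// Nearest-rank percentile: the smallest sample such that at least p percent
// of all samples are less than or equal to it.
function percentile(samples, p) {
  if (samples.length === 0) throw new RangeError('no samples');
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1];
}

// Hypothetical memory samples in MB (one per scrape), unsorted on purpose.
const memoryMb = [240, 180, 450, 170, 320, 182, 410, 175, 300, 190];
for (const p of [50, 95, 99]) {
  console.log(`P${p}: ${percentile(memoryMb, p)}MB`);
}
```

With only ten samples, P95 and P99 both land on the maximum, which is one reason to collect a couple of weeks of data before trusting the tail percentiles.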

For our Node.js API, the data looked like this:

Memory Usage:
  P50: 180MB
  P95: 320MB
  P99: 450MB
  Startup: 280MB

CPU Usage:
  P50: 0.15 cores
  P95: 0.8 cores
  P99: 1.2 cores
  Peak: 1.5 cores

Based on this data, we set resource requests and limits:

resources:
  requests:
    memory: "512Mi"   # Above P99 (450MB) for normal operation
    cpu: "500m"       # Between P50 (0.15) and P95 (0.8 cores)
  limits:
    memory: "1Gi"     # 2x requests for headroom
    cpu: "2000m"      # Allows bursting during spikes

The key insight: requests are what you need, limits are your safety net. Requests guarantee resources. Limits prevent runaway processes from taking down the node.

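We set memory requests above P99 usage so containers have enough memory under normal load, and CPU requests between P50 and P95, because CPU is compressible: a container can burst above its CPU request without being killed. The memory limit sits at 2x the request and the CPU limit at 4x, leaving room for spikes while preventing catastrophic resource exhaustion.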
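It pays to be exact about the units in that spec: `Mi` and `Gi` are binary (1Mi = 2^20 bytes, so 512Mi is about 537MB), `M` and `G` are decimal, and a CPU value of `500m` means 0.5 cores. A minimal parser for the common suffixes, plus a check that no limit is set below its request; `parseQuantity` and `checkResources` are hypothetical helpers that skip parts of the full Kubernetes quantity grammar, such as exponent forms:

```javascript
// Parse common Kubernetes quantities into plain numbers: bytes for memory,
// cores for CPU. Binary suffixes (Ki, Mi, Gi, Ti) are powers of 1024; decimal
// ones (k, M, G, T) are powers of 1000. Exponent forms like "1e3" are not handled.
const MULTIPLIERS = {
  k: 1e3, M: 1e6, G: 1e9, T: 1e12,
  Ki: 2 ** 10, Mi: 2 ** 20, Gi: 2 ** 30, Ti: 2 ** 40,
};

function parseQuantity(q) {
  const match = /^(\d+(?:\.\d+)?)([A-Za-z]*)$/.exec(String(q).trim());
  if (!match) throw new Error(`unsupported quantity: ${q}`);
  const [, num, suffix] = match;
  const value = Number(num);
  if (suffix === '') return value;
  if (suffix === 'm') return value / 1000; // millicores: "500m" = 0.5 cores
  if (!Object.prototype.hasOwnProperty.call(MULTIPLIERS, suffix)) {
    throw new Error(`unknown suffix: ${suffix}`);
  }
  return value * MULTIPLIERS[suffix];
}

// List resources whose limit is lower than their request (Kubernetes
// rejects such a spec, so it's worth catching in CI).
function checkResources({ requests = {}, limits = {} }) {
  return Object.keys(limits).filter(
    (name) => name in requests && parseQuantity(limits[name]) < parseQuantity(requests[name]),
  );
}

console.log(parseQuantity('512Mi')); // 536870912
console.log(parseQuantity('500m'));  // 0.5
console.log(checkResources({
  requests: { memory: '512Mi', cpu: '500m' },
  limits: { memory: '1Gi', cpu: '2000m' },
})); // []
```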

Here's what happened when we applied these limits:

# Before limits - OOM killed during traffic spike
$ kubectl get pods
NAME                   READY   STATUS      RESTARTS   AGE
api-7d8f9b5c6d-x2k9p   0/1     OOMKilled   5          10m

# After limits - graceful handling
$ kubectl get pods
NAME                   READY   STATUS    RESTARTS   AGE
api-7d8f9b5c6d-p8m3q   1/1     Running   0          2h

The container stays running, and because every pod now declares its requests, the scheduler only places pods on nodes with enough unreserved capacity for them.

CPU Limits: The Throttling Trap

Here's something that surprised me: CPU limits can actually hurt performance even when you're not hitting the limit. This is because of how the Linux kernel implements CPU quotas.

When you set a CPU limit, the kernel enforces it using CFS (Completely Fair Scheduler) quotas. The quota is checked every 100ms. If your container uses more than its quota in that period, it gets throttled for the rest of the period—even if the CPU is idle.
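The quota arithmetic is worth spelling out: a limit of L cores grants L × 100ms of CPU time per 100ms period, and that budget is shared by all of the container's threads. A simplified sketch that ignores scheduler details and counts throttled periods from per-period CPU demand; `throttledPeriods` is a hypothetical helper and the demand figures are made up:

```javascript
// Simplified CFS bandwidth model: each period grants limitCores * periodMs of
// CPU time, summed across all threads. A period whose demand exceeds that
// quota is throttled for its remainder. Carry-over and scheduler detail ignored.
function throttledPeriods(demandMsPerPeriod, limitCores, periodMs = 100) {
  const quotaMs = limitCores * periodMs;
  return demandMsPerPeriod.filter((demandMs) => demandMs > quotaMs).length;
}

// Hypothetical CPU demand per 100ms period, in ms of CPU time across threads.
// 36ms is one burst at 1.8 cores for 20ms; 320ms is, say, 8 threads busy for 40ms.
const demand = [36, 20, 320, 150, 240, 60];
const limitCores = 2; // cpu: "2000m" -> 200ms of CPU time per period

const throttled = throttledPeriods(demand, limitCores);
const avgCores = demand.reduce((sum, ms) => sum + ms, 0) / (demand.length * 100);
console.log(`average ${avgCores.toFixed(2)} cores, throttled in ${throttled} of ${demand.length} periods`);
```

The average stays well under the 2-core limit, yet two of the six periods are throttled because their multi-threaded bursts exhaust the quota early.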

We discovered this when our API response times suddenly jumped from 50ms to 150ms after adding CPU limits. The containers weren't hitting their limits in aggregate, but they were experiencing micro-throttling.

Here's what the metrics showed:

Container: api-7d8f9b5c6d-p8m3q
CPU Usage: 850m (limit: 2000m)
Throttled Periods: 45,234
Total Periods: 120,000
Throttle Percentage: 37.7%

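The container was throttled in 37.7% of periods despite averaging less than half its CPU limit. This happened because our API's CPU usage was bursty, and CPU time is counted across all of a container's threads: a short burst running on several cores at once could use up the period's 200ms quota (2 cores × 100ms) in a fraction of the period, leaving the container throttled until the next period began even though its average stayed low.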
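The throttle percentage is the ratio of throttled periods to total periods, which the kernel reports in the container cgroup's `cpu.stat` file as `nr_periods` and `nr_throttled` (inside a cgroup v2 container, typically at /sys/fs/cgroup/cpu.stat). A minimal parser; the sample text reuses the numbers above, and the live read is skipped when the file is absent:

```javascript
const fs = require('fs');

// Parse "key value" lines from a cgroup cpu.stat file into numbers.
function parseCpuStat(text) {
  const stats = {};
  for (const line of text.split('\n')) {
    const [key, value] = line.trim().split(/\s+/);
    if (key && value !== undefined) stats[key] = Number(value);
  }
  return stats;
}

// Percentage of CFS periods in which the cgroup was throttled.
function throttlePercent(stats) {
  if (!stats.nr_periods) return 0;
  return ((stats.nr_throttled ?? 0) / stats.nr_periods) * 100;
}

const sample = 'nr_periods 120000\nnr_throttled 45234\n';
console.log(throttlePercent(parseCpuStat(sample)).toFixed(1)); // 37.7

// Live value for the current container, if the cgroup v2 file is present.
const statFile = '/sys/fs/cgroup/cpu.stat';
if (fs.existsSync(statFile)) {
  const live = parseCpuStat(fs.readFileSync(statFile, 'utf8'));
  console.log(`throttled in ${throttlePercent(live).toFixed(1)}% of periods`);
}
```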

The solution? We removed CPU limits entirely and only kept CPU requests:

resources:
  requests:
    memory: "512Mi"
    cpu: "500m"       # Guarantees scheduling
  limits:
    memory: "1Gi"     # Contains OOM kills to this container
    # No CPU limit - allows bursting

This is controversial in the Kubernetes community, but it worked for us. Our response times dropped back to 50ms, and we haven't had CPU-related incidents. The key is to monitor CPU usage and set appropriate requests so Kubernetes schedules containers on nodes with sufficient capacity.

Memory Limits and the OOM Killer

Memory limits are different from CPU limits. You absolutely need them because running out of memory crashes your application. But setting them wrong is equally dangerous.

We learned this when our data processing service started getting OOM killed seemingly randomly. The logs showed:

[2024-01-15 14:23:45] Processing batch 1234...
[2024-01-15 14:23:47] Batch complete, processed 50000 records
[2024-01-15 14:23:48] Killed

No error message, no warning—just "Killed". The kernel OOM killer had terminated the process for exceeding its memory limit.

The problem was our limit was too low. We'd set it at 1GB based on average usage, but our batch processing had occasional spikes to 1.2GB when processing large datasets. The OOM killer doesn't care about averages—it kills processes the instant they exceed their limit.

We increased the limit to 2GB and added memory monitoring:

// Add to application code
const v8 = require('v8');

// Note: this measures the V8 JavaScript heap against V8's own heap limit,
// not the container's total memory against its cgroup limit.
setInterval(() => {
  const heapStats = v8.getHeapStatistics();
  const usedHeap = heapStats.used_heap_size / 1024 / 1024;
  const totalHeap = heapStats.heap_size_limit / 1024 / 1024;
  const usage = (usedHeap / totalHeap) * 100;

  console.log(`Memory usage: ${usedHeap.toFixed(2)}MB / ${totalHeap.toFixed(2)}MB (${usage.toFixed(2)}%)`);

  if (usage > 85) {
    console.warn('WARNING: Memory usage above 85%, consider scaling or investigating memory leak');
  }
}, 30000); // Check every 30 seconds

This gave us visibility into memory usage before hitting the limit. We could see the pattern: memory would climb during batch processing, then drop after garbage collection. With the 2GB limit, we had enough headroom for these spikes.

We also added graceful degradation. When memory usage hits 80%, our service stops accepting new batch jobs and finishes processing current ones:

const v8 = require('v8');

const MAX_MEMORY_THRESHOLD = 0.80;

// Returns false when the V8 heap is above the threshold
function checkMemoryBeforeProcessing() {
  const heapStats = v8.getHeapStatistics();
  const usage = heapStats.used_heap_size / heapStats.heap_size_limit;
  if (usage > MAX_MEMORY_THRESHOLD) {
    return false; // Don't accept new work
  }
  return true;
}

async function processBatch(batch) {
  if (!checkMemoryBeforeProcessing()) {
    console.log('Memory threshold exceeded, deferring batch processing');
    return { deferred: true };
  }
  // Process batch...
}

This prevented OOM kills and gave Kubernetes time to scale up additional pods to handle the load.
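One caveat about both snippets: `v8.getHeapStatistics()` describes the JavaScript heap relative to V8's own heap limit, while the OOM killer acts on the container's total memory, which also includes buffers, native allocations, and anything else charged to the cgroup. A sketch that reads the cgroup v2 files `memory.current` and `memory.max` instead; it assumes the container's cgroup is mounted at /sys/fs/cgroup and returns null when the files are absent. Note that `memory.current` also counts page cache the kernel can reclaim, so treat the figure as an upper bound:

```javascript
const fs = require('fs');

// Fraction of the cgroup memory limit in use, or null if there is no limit
// (memory.max contains "max").
function memoryPressure(currentText, maxText) {
  const max = maxText.trim();
  if (max === 'max') return null;
  return Number(currentText.trim()) / Number(max);
}

// Read cgroup v2 memory files; null when they are not present (cgroup v1,
// not running in a container, or a different mount layout).
function readContainerMemory(dir = '/sys/fs/cgroup') {
  try {
    const current = fs.readFileSync(`${dir}/memory.current`, 'utf8');
    const max = fs.readFileSync(`${dir}/memory.max`, 'utf8');
    return { currentBytes: Number(current.trim()), pressure: memoryPressure(current, max) };
  } catch {
    return null;
  }
}

const mem = readContainerMemory();
if (mem && mem.pressure !== null && mem.pressure > 0.85) {
  console.warn(`Container memory at ${(mem.pressure * 100).toFixed(1)}% of its limit`);
}
// For this process alone, process.memoryUsage().rss is the closest Node-level figure.
```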

Health Checks: Beyond Just "Is It Running?"

Our initial health checks were embarrassingly simple:

HEALTHCHECK CMD curl -f http://localhost:3000/ || exit 1

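This checked if the HTTP server responded. That's it. It didn't check if the database connection was alive, if the cache was accessible, or if the service was actually functional. (It also only applies to plain Docker: Kubernetes ignores the HEALTHCHECK instruction and relies solely on the probes defined in the pod spec.)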

We discovered this was insufficient when we had a partial outage. Our API containers were "healthy" according to Kubernetes, but they couldn't connect to the database because of a networking issue. Users got 500 errors, but Kubernetes kept routing traffic to the broken pods because they passed health checks.

Liveness vs. Readiness vs. Startup Probes

Kubernetes has three types of probes, and understanding when to use each one saved us from countless outages:

Startup probes: Used during container initialization. Our Node.js API takes 15-20 seconds to start: it needs to connect to the database, warm up caches, and load configuration. During this time, the container is running but not ready to serve traffic, and until the startup probe succeeds, Kubernetes doesn't run the liveness or readiness probes at all.

Readiness probes: Determine if the container should receive traffic. If the readiness probe fails, Kubernetes removes the pod from service endpoints but doesn't restart it.

Liveness probes: Determine if the container is still alive. If it fails, Kubernetes restarts the container.

Here's our production configuration:

apiVersion: v1
kind: Pod
metadata:
  name: api
spec:
  containers:
    - name: api
      image: api:v2.1.0
      ports:
        - containerPort: 3000
      # Startup probe - gives app time to initialize
      startupProbe:
        httpGet:
          path: /health/startup
          port: 3000
        failureThreshold: 30   # 30 failures allowed
        periodSeconds: 2       # Check every 2 seconds
        # Total startup time: 30 * 2 = 60 seconds
      # Liveness probe - restart if unhealthy
      livenessProbe:
        httpGet:
          path: /health/live
          port: 3000
        initialDelaySeconds: 0 # Startup probe handles initial delay
        periodSeconds: 10
        timeoutSeconds: 5
        failureThreshold: 3    # Restart after 3 failures (30 seconds)
      # Readiness probe - remove from service if not ready
      readinessProbe:
        httpGet:
          path: /health/ready
          port: 3000
        initialDelaySeconds: 0
        periodSeconds: 5
        timeoutSeconds: 3
        failureThreshold: 2    # Remove after 2 failures (10 seconds)
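The timing comments in this manifest follow from one formula: a probe gives up after roughly `initialDelaySeconds + periodSeconds × failureThreshold` seconds (ignoring `timeoutSeconds` and scheduling jitter). A small sketch that computes those windows and checks that the 10-second drain delay in the shutdown handler covers the readiness window; `failureWindowSeconds` is a hypothetical helper whose defaults match Kubernetes' (period 10s, threshold 3):

```javascript
// Approximate seconds a probe can keep failing before Kubernetes acts
// (restart for startup/liveness, removal from endpoints for readiness).
// Defaults match Kubernetes: periodSeconds 10, failureThreshold 3.
function failureWindowSeconds({ initialDelaySeconds = 0, periodSeconds = 10, failureThreshold = 3 } = {}) {
  return initialDelaySeconds + periodSeconds * failureThreshold;
}

// Values copied from the manifest above.
const probes = {
  startup: { periodSeconds: 2, failureThreshold: 30 },
  liveness: { periodSeconds: 10, failureThreshold: 3 },
  readiness: { periodSeconds: 5, failureThreshold: 2 },
};

for (const [name, probe] of Object.entries(probes)) {
  console.log(`${name}: ~${failureWindowSeconds(probe)}s`);
}
// startup: ~60s, liveness: ~30s, readiness: ~10s

// The shutdown handler waits this long before closing the server.
const drainDelaySeconds = 10;
console.log(drainDelaySeconds >= failureWindowSeconds(probes.readiness)); // true
```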

The key is having different endpoints for each probe type. Here's our implementation:

const express = require('express');
const app = express();

// db and cache are the app's existing database and cache clients;
// each is assumed to expose an async ping()

// Track service state
let isShuttingDown = false;

// Startup probe - just checks if server is responding
app.get('/health/startup', (req, res) => {
  res.status(200).json({ status: 'starting' });
});

// Liveness probe - checks if app is alive (not deadlocked)
app.get('/health/live', (req, res) => {
  if (isShuttingDown) {
    return res.status(503).json({ status: 'shutting down' });
  }
  // Simple check - can we respond?
  res.status(200).json({ status: 'alive' });
});

// Readiness probe - checks if app can serve traffic
app.get('/health/ready', async (req, res) => {
  if (isShuttingDown) {
    return res.status(503).json({ status: 'not ready - shutting down' });
  }

  const checks = { database: false, cache: false };

  // Check database connection
  try {
    await db.ping();
    checks.database = true;
  } catch (err) {
    checks.database = false;
  }

  // Check cache connection
  try {
    await cache.ping();
    checks.cache = true;
  } catch (err) {
    checks.cache = false;
  }

  const allHealthy = Object.values(checks).every(check => check === true);
  if (allHealthy) {
    res.status(200).json({ status: 'ready', checks });
  } else {
    res.status(503).json({ status: 'not ready', checks });
  }
});

const server = app.listen(3000);

// Graceful shutdown
process.on('SIGTERM', () => {
  console.log('SIGTERM received, starting graceful shutdown');
  isShuttingDown = true;
  // Give time for readiness probe to remove us from service
  setTimeout(() => {
    server.close(() => {
      console.log('Server closed');
      process.exit(0);
    });
  }, 10000); // 10 second grace period
});

This setup prevents several common issues:

- Startup probe protects slow starts: until it succeeds, Kubernetes neither routes traffic to the pod nor runs the liveness probe, so a 15-20 second initialization isn't mistaken for a hung process.

- Readiness probe handles temporary issues: If the database connection drops, the pod is removed from service but not restarted. When the connection recovers, it automatically rejoins.

- Liveness probe catches deadlocks: If the app stops responding entirely (deadlock, infinite loop), it gets restarted.

- Graceful shutdown: When Kubernetes sends SIGTERM, we set isShuttingDown = true, which makes readiness probes fail, and we keep serving for 10 more seconds before closing the server.

The graceful shutdown is crucial. When a pod is deleted, Kubernetes sends SIGTERM and starts removing the pod from service endpoints at the same time, and that removal takes a moment to propagate. If the process exited immediately, in-flight requests, along with new requests still being routed to the pod, would fail. With our implementation, we have a 10-second window where existing requests can complete before the container shuts down.

Logging and Observability: Finding Needles in Container Haystacks

When we had 5 containers, we could SSH into each one and tail logs. At 200+ containers, that approach was impossible. We needed centralized logging and proper observability.

Structured Logging

Our original logs looked like this:

Server started on port 3000
User logged in
Processing order
Order complete
Error: Database connection failed

These logs were useless at scale. Which user logged in? Which order? What caused the database connection to fail? We had no context.

We switched to structured JSON logging:

const winston = require('winston');

const logger = winston.createLogger({
  level: process.env.LOG_LEVEL || 'info',
  format: winston.format.combine(
    winston.format.timestamp(),
    winston.format.errors({ stack: true }),
    winston.format.json()
  ),
  defaultMeta: {
    service: 'api',
    version: process.env.APP_VERSION || 'unknown',
    environment: process.env.NODE_ENV || 'development',
    hostname: process.env.HOSTNAME || 'unknown',
  },
  transports: [
    new winston.transports.Console()
  ]
});

// Usage (inside request handlers; user, req, err, dbConfig and retryCount
// come from the surrounding code)
logger.info('User logged in', {
  userId: user.id,
  email: user.email,
  loginMethod: 'oauth',
  ipAddress: req.ip,
});

logger.error('Database connection failed', {
  error: err.message,
  stack: err.stack,
  database: 'postgres',
  host: dbConfig.host,
  attemptNumber: retryCount,
});

Now every log entry is a structured JSON object with consistent fields. We can search, filter, and aggregate logs in our logging platform (we use Grafana Loki).

Example queries we run daily:

# Find all errors in the last hour (the time range is set by the query
# range in Grafana or the API, not inside LogQL)
{service="api"} | json | level="error"

# Find slow database queries
{service="api"} | json | duration > 1000 | query_type="database"

# Track user activity (query range: last 24 hours)
{service="api"} | json | userId="12345"

Container Logging Best Practices

One mistake we made: writing logs to files inside containers. This caused two problems:

- Disk space: Logs would fill up the container filesystem, causing the app to crash.

- Loss of logs: When containers were killed, we lost the logs.

The Docker way is to log to stdout/stderr. Docker captures these streams and makes them available through docker logs. Kubernetes does the same with kubectl logs.

We removed all file-based logging:

// BAD - don't do this in containers
const logger = winston.createLogger({
  transports: [
    new winston.transports.File({ filename: '/var/log/app.log' })
  ]
});

// GOOD - log to stdout
const logger = winston.createLogger({
  transports: [
    new winston.transports.Console()
  ]
});

Our log aggregation system (Fluentd) collects logs from stdout/stderr of all containers and ships them to our centralized logging platform.

Distributed Tracing

With 200+ containers across 15 microservices, debugging issues was like finding a needle in a haystack. A single user request might touch 8 different services. When something went wrong, we had to piece together logs from multiple services to understand what happened.

We implemented distributed tracing with OpenTelemetry:

// Load this before express/http are required so the instrumentations can patch them
const { trace } = require('@opentelemetry/api');
const { NodeTracerProvider } = require('@opentelemetry/sdk-trace-node');
const { registerInstrumentations } = require('@opentelemetry/instrumentation');
const { HttpInstrumentation } = require('@opentelemetry/instrumentation-http');
const { ExpressInstrumentation } = require('@opentelemetry/instrumentation-express');

// Initialize tracing (span processor/exporter setup omitted: without one,
// spans still get IDs and propagate, but nothing is shipped to a backend)
const provider = new NodeTracerProvider();
provider.register();

registerInstrumentations({
  instrumentations: [
    new HttpInstrumentation(),
    new ExpressInstrumentation(),
  ],
});

// Every request now gets a trace ID that propagates across services
// (app and logger are the Express app and winston logger defined elsewhere)
app.use((req, res, next) => {
  const span = trace.getActiveSpan();
  if (span) {
    // Add trace ID to logs for correlation
    req.log = logger.child({
      traceId: span.spanContext().traceId,
      spanId: span.spanContext().spanId,
    });
  }
  next();
});

Now when a request fails, we can see the entire path it took through our system:

Trace ID: 7f8a9b2c3d4e5f6a
├─ [api-gateway] POST /orders (120ms)
│ ├─ [auth-service] GET /verify (15ms) ✓
│ ├─ [order-service] POST /create (85ms)
│ │ ├─ [inventory-service] POST /reserve (45ms) ✓
│ │ ├─ [payment-service] POST /charge (250ms) ✗ FAILED
│ │ │ └─ Error: Payment gateway timeout
│ │ └─ [inventory-service] POST /release (20ms) ✓
│ └─ Response: 500 Internal Server Error

This visualization shows exactly where the failure occurred (payment service timeout) and how it cascaded through the system (inventory was released).
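Between services, the trace context travels in an HTTP header. OpenTelemetry's default propagator uses the W3C Trace Context `traceparent` header, formatted as `version-traceid-parentid-flags`, with a 32-hex-digit trace ID and a 16-hex-digit span ID. The HTTP instrumentation reads and writes it automatically; this sketch (a hypothetical `parseTraceparent` helper) only parses it to show what crosses the wire, using the example value from the W3C specification:

```javascript
// Parse a W3C Trace Context "traceparent" header (version 00 layout).
const TRACEPARENT = /^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

function parseTraceparent(header) {
  const m = TRACEPARENT.exec(String(header).trim());
  if (!m) return null;
  const [, version, traceId, parentId, flags] = m;
  // Version "ff" is forbidden and all-zero IDs are invalid per the spec.
  if (version === 'ff' || /^0+$/.test(traceId) || /^0+$/.test(parentId)) return null;
  // Bit 0 of the flags byte is the "sampled" flag.
  return { version, traceId, parentId, sampled: (parseInt(flags, 16) & 1) === 1 };
}

console.log(parseTraceparent('00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01'));
```

The `traceId` field is the value the logging middleware above attaches to every log line, which is what lets logs and traces be joined later.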

Networking: The Container Communication Maze

When we first deployed to Kubernetes, our services couldn't talk to each other reliably. Sometimes requests would timeout. Other times they'd fail with "connection refused." The logs showed nothing obvious.

The problem was DNS resolution and service discovery. We were hardcoding IP addresses and hostnames that worked in development but not in production.

Service Discovery and DNS

In Kubernetes, pods get ephemeral IP addresses: whenever a pod is rescheduled or recreated, it comes back with a new one. You can't hardcode IPs. Instead, you use Kubernetes Services, which provide stable DNS names.

We changed from:

// BAD - hardcoded IP

const orderServiceUrl = 'http://10.0.1.45:8080';

// BAD - external hostname

const orderServiceUrl = 'http://order-service.example.com';

To:

// GOOD - Kubernetes service DNS

const orderServiceUrl = process.env.ORDER_SERVICE_URL || 'http://order-service.default.svc.cluster.local:8080';

The DNS name format is: <service-name>.<namespace>.svc.cluster.local

For services in the same namespace, you can use the short form:

const orderServiceUrl = 'http://order-service:8080';
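The environment-override-then-DNS pattern can be wrapped in a small helper so every service builds URLs the same way. This is a sketch: `serviceUrl` and the `<NAME>_URL` variable convention (generalized from `ORDER_SERVICE_URL` above) are hypothetical, and it assumes the default cluster domain `cluster.local`, which some clusters change.

```javascript
// Hypothetical helper: prefer an explicit env override (e.g. ORDER_SERVICE_URL),
// otherwise build the in-cluster name <service>.<namespace>.svc.cluster.local
function serviceUrl(service, { namespace = 'default', port = 80, env = process.env } = {}) {
  const envName = `${service.toUpperCase().replace(/-/g, '_')}_URL`;
  if (env[envName]) return env[envName];
  return `http://${service}.${namespace}.svc.cluster.local:${port}`;
}

console.log(serviceUrl('order-service', { port: 8080, env: {} }));
```

Keeping the override lets local development and tests point at a different host without code changes.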

Connection Pooling and Keep-Alive

Another issue we hit: our containers were opening new TCP connections for every request. With 50M requests per day, we were exhausting ephemeral ports and overwhelming our services with connection overhead.

The fix was connection pooling:

const http = require('http');

// Create HTTP agent with connection pooling

const agent = new http.Agent({

keepAlive: true,

keepAliveMsecs: 30000, // Initial delay before TCP keep-alive probes on idle sockets

maxSockets: 50, // Max connections per host

maxFreeSockets: 10, // Max idle connections to keep

});

// Use agent for all requests

const axios = require('axios');

const client = axios.create({

httpAgent: agent,

timeout: 5000,

});

// Now requests reuse connections

await client.get('http://order-service:8080/orders/123');

This reduced our connection overhead by 90% and improved response times from 80ms to 45ms.

Retry Logic and Circuit Breakers

In a distributed system, services fail. Networks are unreliable. We needed retry logic, but our first implementation made things worse.

We added simple retries:

// BAD - aggressive retries amplify failures

async function callService(url) {

for (let i = 0; i < 5; i++) {

try {

return await axios.get(url);

} catch (err) {

if (i === 4) throw err;

// Immediate retry

}

}

}

When a service went down, every request would be attempted 5 times back to back. With 1000 requests per second, that's 5000 requests per second hammering a failing service. We DDoS'd ourselves.

We implemented proper retry logic with exponential backoff and circuit breakers:

const CircuitBreaker = require('opossum');
const axios = require('axios');

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Circuit breaker options
const options = {
  timeout: 5000, // Request timeout
  errorThresholdPercentage: 50, // Open circuit at 50% errors
  resetTimeout: 30000, // Try again after 30s
};

// Wrap a single service call in the circuit breaker
const breaker = new CircuitBreaker((url) => axios.get(url, { timeout: 5000 }), options);

// Retry through the breaker with exponential backoff: 1s, 2s, 4s.
// axios has no built-in retry option, so the loop is explicit.
async function callWithRetry(url, retries = 3) {
  for (let attempt = 0; ; attempt++) {
    try {
      return await breaker.fire(url);
    } catch (err) {
      // Never retry into an open circuit, and give up after the last attempt
      if (breaker.opened || attempt >= retries) throw err;
      await sleep(1000 * 2 ** attempt);
    }
  }
}

// Handle circuit breaker events
breaker.on('open', () => logger.warn('Circuit breaker opened - service is failing'));
breaker.on('halfOpen', () => logger.info('Circuit breaker half-open - trying request'));
breaker.on('close', () => logger.info('Circuit breaker closed - service recovered'));

// Use circuit breaker
async function getOrder(id) {
  try {
    const response = await callWithRetry(`http://order-service:8080/orders/${id}`);
    return response.data;
  } catch (err) {
    if (breaker.opened) {
      // Circuit is open, fail fast
      return { error: 'Service temporarily unavailable' };
    }
    throw err;
  }
}

This prevented cascading failures. When a service goes down, the circuit breaker opens after 50% error rate. Further requests fail fast without hitting the failing service. After 30 seconds, it tries one request (half-open state). If it succeeds, the circuit closes. If it fails, it stays open for another 30 seconds.
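The state transitions described above (closed, open, half-open) fit in a small class. This is a minimal sketch, not opossum's implementation: opossum measures errors over a rolling time window, whereas `SimpleBreaker` (a hypothetical name) counts calls since the circuit last closed and assumes a minimum call volume (`volumeThreshold`) before it may open. The clock is injectable so the timing can be tested.

```javascript
// Minimal circuit breaker state machine (illustrative; not opossum's code)
class SimpleBreaker {
  constructor({
    errorThresholdPercentage = 50, // Open when this % of calls fail...
    volumeThreshold = 10, // ...but only after at least this many calls (assumption)
    resetTimeout = 30000, // How long to stay open before a trial request
    now = Date.now, // Injectable clock for testing
  } = {}) {
    Object.assign(this, { errorThresholdPercentage, volumeThreshold, resetTimeout, now });
    this.state = 'closed';
    this.successes = 0;
    this.failures = 0;
    this.openedAt = 0;
  }

  async fire(action) {
    if (this.state === 'open') {
      if (this.now() - this.openedAt < this.resetTimeout) {
        throw new Error('Breaker is open'); // Fail fast without calling the service
      }
      this.state = 'halfOpen'; // Reset timeout elapsed: allow one trial request
    } else if (this.state === 'halfOpen') {
      throw new Error('Breaker is half-open; trial request in flight');
    }
    try {
      const result = await action();
      this.onSuccess();
      return result;
    } catch (err) {
      this.onFailure();
      throw err;
    }
  }

  onSuccess() {
    if (this.state === 'halfOpen') this.close(); // Trial succeeded
    else this.successes++;
  }

  onFailure() {
    if (this.state === 'halfOpen') return this.open(); // Trial failed: open for another period
    this.failures++;
    const total = this.successes + this.failures;
    if (total >= this.volumeThreshold && (this.failures / total) * 100 >= this.errorThresholdPercentage) {
      this.open();
    }
  }

  open() {
    this.state = 'open';
    this.openedAt = this.now();
  }

  close() {
    this.state = 'closed';
    this.successes = 0;
    this.failures = 0;
  }
}
```

In production we keep using opossum as shown above; the sketch only makes the transitions concrete.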

Secrets Management: The Security Wake-Up Call

Our original approach to secrets was... embarrassing. We had this in our Dockerfile:

ENV DATABASE_PASSWORD=super_secret_password_123

Yes, we hardcoded secrets in the Dockerfile. They were in our Git history, our Docker images, and our container registry. When we realized this, we panicked and rotated every credential.

Environment Variables Done Right

We moved secrets to environment variables, but even that has pitfalls. Here's what we learned:

Never put secrets in Dockerfiles or docker-compose files. They end up in image layers and Git history.

Use Kubernetes Secrets or a secret management system. We use Kubernetes Secrets for most credentials:

apiVersion: v1

kind: Secret

metadata:

name: api-secrets

type: Opaque

data:

database-password: c3VwZXJfc2VjcmV0X3Bhc3N3b3JkXzEyMw== # base64-encoded, not encrypted

api-key: YW5vdGhlcl9zZWNyZXRfa2V5XzQ1Ng==
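Values under `data` must be base64-encoded, but base64 is an encoding, not encryption. A quick Node check decodes both values above without any key, so anyone who can read the Secret object can read the password. The `rotated_password_789` value is a placeholder used only to show the encoding direction.

```javascript
// Secret `data` values from the manifest above
const encoded = {
  'database-password': 'c3VwZXJfc2VjcmV0X3Bhc3N3b3JkXzEyMw==',
  'api-key': 'YW5vdGhlcl9zZWNyZXRfa2V5XzQ1Ng==',
};

// Decoding needs no key at all
const decoded = Object.fromEntries(
  Object.entries(encoded).map(([key, value]) => [key, Buffer.from(value, 'base64').toString('utf8')])
);

// Producing a value for the manifest is the reverse operation (placeholder value)
const newValue = Buffer.from('rotated_password_789', 'utf8').toString('base64');

console.log(decoded, newValue);
```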

Then inject them as environment variables:

apiVersion: v1

kind: Pod

metadata:

name: api

spec:

containers:

- name: api

image: api:v2.1.0

env:

- name: DATABASE_PASSWORD

valueFrom:

secretKeyRef:

name: api-secrets

key: database-password

- name: API_KEY

valueFrom:

secretKeyRef:

name: api-secrets

key: api-key

For highly sensitive secrets (encryption keys, signing keys), we use HashiCorp Vault with dynamic secret generation.

Secret Rotation

We learned that having secrets is not enough—you need to rotate them. We now rotate all credentials quarterly and have automation to make it painless:

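// Application supports hot-reloading of secrets.
// Only Secrets mounted as volumes are updated in place; values injected
// through env (as in the Pod spec above) are fixed when the container starts.
const fs = require('fs');

const passwordFile = process.env.DATABASE_PASSWORD_FILE;
let dbPassword = passwordFile
  ? fs.readFileSync(passwordFile, 'utf8').trim()
  : process.env.DATABASE_PASSWORD;

// Kubernetes updates mounted Secrets by atomically swapping a symlink, which
// fs.watch on the file path can miss, so poll the file and compare contents
// (the 10s interval is an arbitrary choice).
if (passwordFile) {
  setInterval(() => {
    let next;
    try {
      next = fs.readFileSync(passwordFile, 'utf8').trim();
    } catch (err) {
      logger.warn(`Could not read database password file: ${err.message}`);
      return;
    }
    if (next !== dbPassword) {
      dbPassword = next;
      logger.info('Database password reloaded from file');
      reconnectDatabase(); // Reconnect with new password
    }
  }, 10000);
}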

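This lets us rotate secrets without restarting containers, as long as the Secret is mounted as a volume (and not via subPath, which never receives updates). The kubelet propagates Secret changes to mounted files with some delay, so a new value is not picked up instantly.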

Conclusion

Scaling from 100k to 50M requests per day taught us that Docker mastery isn't about knowing every CLI flag or Dockerfile instruction—it's about understanding production realities that only reveal themselves under load.

Key Takeaways

Image optimization is non-negotiable at scale. Multi-stage builds, Alpine base images, and proper layer caching aren't premature optimization—they're the difference between 90-second deployments and 12-minute deployments when you need to scale fast. Our 1.2GB to 180MB reduction wasn't just about saving disk space; it was about survival during traffic spikes.

Security must be built in from day one. Running as non-root, using read-only filesystems, pinning base image versions with SHA digests, and scanning for vulnerabilities aren't optional hardening steps. They're essential practices that prevent the kind of breaches that end companies. That penetration test where the tester got root access because we ran containers as root? That was our wake-up call.

Resource limits require measurement, not guesswork. Setting requests based on P95 usage and limits at 2x requests came from weeks of production monitoring. The CPU throttling issue we discovered—where containers were throttled despite being under their limits—taught us that sometimes the conventional wisdom (always set CPU limits) doesn't apply to every workload.

Observability is the only way to debug distributed systems. Structured logging, distributed tracing, and proper health checks aren't nice-to-haves. When you have 200+ containers across 15 services, they're the only way to understand what's happening. That trace showing the payment gateway timeout cascading through our system? We would have spent days debugging that without distributed tracing.

Networking and service communication patterns matter more than you think. Connection pooling, circuit breakers, and proper retry logic with exponential backoff prevented us from DDoS'ing ourselves during outages. The circuit breaker pattern alone saved us from multiple cascading failures.

Secrets management is not solved by environment variables alone. Hot-reloading credentials, using proper secret management systems, and implementing rotation procedures are essential for security and operational flexibility.

The Real Lesson

The most important lesson from our journey: production is a different world from development. Your containers might work perfectly on your laptop, pass all CI/CD tests, and run fine in staging. But production has traffic spikes, network partitions, resource constraints, security threats, and failure modes you never imagined.

The practices in this article aren't theoretical best practices from documentation. They're solutions to real problems we encountered while scaling from a small startup to handling 50M+ requests daily. Each pattern, each configuration, each line of code came from a production incident, a performance bottleneck, or a security scare.

Start implementing these practices before you hit the wall we hit. Your future self, frantically debugging a production outage at 3am, will thank you.

What's Next

We're still learning. We're currently working on:

- Service mesh implementation for better traffic management

- GitOps workflows for declarative infrastructure

- Cost optimization through better resource utilization

- Advanced autoscaling based on custom metrics

Containerization at scale is a journey, not a destination. The patterns that work at 50M requests per day will need evolution at 500M. But the fundamentals—small images, proper security, realistic resource limits, comprehensive observability—those remain constant.

If you're building containerized applications, I hope our mistakes and solutions help you avoid some of the pain we went through. And if you've scaled beyond where we are now, I'd love to hear what lessons you've learned. This field moves fast, and we're all learning together.

All code examples and configurations in this article are production-tested and currently running in our infrastructure. Your mileage may vary based on your specific requirements, but these patterns have proven reliable for us.

Performance Monitoring and Metrics

After implementing all the practices above, we still needed a way to measure whether our optimizations were actually working. We built a comprehensive metrics pipeline that tracks container performance across our entire infrastructure.

Prometheus and Custom Metrics

We instrument every container with Prometheus metrics. Here's our standard metrics setup:

const promClient = require('prom-client');

// Create a Registry

const register = new promClient.Registry();

// Add default metrics (CPU, memory, etc.)

promClient.collectDefaultMetrics({ register });

// Custom application metrics

const httpRequestDuration = new promClient.Histogram({

name: 'http_request_duration_seconds',

help: 'Duration of HTTP requests in seconds',

labelNames: ['method', 'route', 'status_code'],

buckets: [0.01, 0.05, 0.1, 0.5, 1, 2, 5],

register,

});

const activeConnections = new promClient.Gauge({

name: 'active_connections',

help: 'Number of active connections',

register,

});

const databaseQueryDuration = new promClient.Histogram({

name: 'database_query_duration_seconds',

help: 'Duration of database queries in seconds',

labelNames: ['query_type', 'table'],

buckets: [0.001, 0.01, 0.05, 0.1, 0.5, 1],

register,

});

// Middleware to track request duration

app.use((req, res, next) => {

const start = Date.now();

res.on('finish', () => {

const duration = (Date.now() - start) / 1000;

httpRequestDuration

.labels(req.method, req.route?.path || 'unknown', res.statusCode)

.observe(duration);

});

next();

});

// Expose metrics endpoint

app.get('/metrics', async (req, res) => {

res.set('Content-Type', register.contentType);

res.end(await register.metrics());

});

This gives us detailed visibility into every aspect of our application's performance. We can see:

- Request latency by endpoint and status code

- Database query performance by query type

- Active connection counts

- Memory and CPU usage patterns

- Custom business metrics (orders processed, payments completed, etc.)

Grafana Dashboards

We created standardized Grafana dashboards for every service. Here's the query structure we use:

# P95 response time

histogram_quantile(0.95,

sum by (le) (rate(http_request_duration_seconds_bucket[5m]))

)

# Error rate

sum(rate(http_request_duration_seconds_count{status_code=~"5.."}[5m]))

/

sum(rate(http_request_duration_seconds_count[5m]))

# Container restarts (indicates OOM kills or crashes)

increase(kube_pod_container_status_restarts_total[1h])

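# Memory usage percentage (working set vs. limit; containers without a limit are excluded)

container_memory_working_set_bytes{container!=""}

/

(container_spec_memory_limit_bytes{container!=""} > 0) * 100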

These dashboards are critical during incidents. When something goes wrong, we can immediately see:

- Which service is failing

- What the error rate is

- Whether it's a resource issue (CPU/memory)

- How it's affecting downstream services
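The P95 query above depends on `histogram_quantile`, which never sees individual requests. It estimates the quantile from cumulative bucket counts by linear interpolation inside the bucket where the target rank falls. Here is a simplified sketch of that estimation; the bucket counts are made-up example data and `histogramQuantile` is an illustrative name.

```javascript
// buckets: cumulative counts sorted by upper bound `le`, last bucket le = Infinity.
// q is expected to be in (0, 1).
function histogramQuantile(q, buckets) {
  const total = buckets[buckets.length - 1].count;
  if (total === 0) return NaN;
  const rank = q * total;
  let prevLe = 0;
  let prevCount = 0;
  for (const { le, count } of buckets) {
    if (count >= rank) {
      // Rank falls in the +Inf bucket: return the highest finite bound
      if (le === Infinity) return prevLe;
      // Linear interpolation within [prevLe, le]
      return prevLe + (le - prevLe) * ((rank - prevCount) / (count - prevCount));
    }
    prevLe = le;
    prevCount = count;
  }
  return NaN;
}

// 100 requests spread over the prom-client buckets used above
const buckets = [
  { le: 0.01, count: 10 }, { le: 0.05, count: 40 }, { le: 0.1, count: 70 },
  { le: 0.5, count: 95 }, { le: 1, count: 99 }, { le: 2, count: 100 },
  { le: 5, count: 100 }, { le: Infinity, count: 100 },
];
console.log(histogramQuantile(0.95, buckets));
```

One consequence: the estimate is only as precise as the bucket boundaries, so the `buckets` array in the prom-client histogram directly limits how accurate the P95 can be.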

Alerting Rules

We learned the hard way that monitoring without alerting is useless. You need to know about problems before customers start complaining. Here are our core alerting rules:

groups:

- name: container_alerts

interval: 30s

rules:

# High error rate

- alert: HighErrorRate

expr: |

sum by (service) (rate(http_request_duration_seconds_count{status_code=~"5.."}[5m]))

/

sum by (service) (rate(http_request_duration_seconds_count[5m])) > 0.05

for: 5m

labels:

severity: critical

annotations:

summary: "High error rate detected"

description: "Error rate is {{ $value | humanizePercentage }} for {{ $labels.service }}"

# Container restarts

- alert: ContainerRestarts

expr: increase(kube_pod_container_status_restarts_total[15m]) > 3

labels:

severity: warning

annotations:

summary: "Container restarting frequently"

description: "Container {{ $labels.container }} has restarted {{ $value }} times in 15 minutes"

# High memory usage

- alert: HighMemoryUsage

expr: |

(container_memory_working_set_bytes{container!=""} / (container_spec_memory_limit_bytes{container!=""} > 0)) > 0.9

for: 5m

labels:

severity: warning

annotations:

summary: "Container memory usage is high"

description: "Container {{ $labels.container }} is using {{ $value | humanizePercentage }} of memory limit"

# Slow response time

- alert: SlowResponseTime

expr: |

histogram_quantile(0.95,

sum by (le, service) (rate(http_request_duration_seconds_bucket[5m]))

) > 1

for: 10m

labels:

severity: warning

annotations:

summary: "Slow response times detected"

description: "P95 response time is {{ $value }}s for {{ $labels.service }}"

These alerts fire in Slack and PagerDuty, giving us immediate notification of issues. The key is tuning the thresholds and duration. We don't want alerts for brief spikes (hence the for: 5m clauses), but we need to catch sustained problems.
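The `for:` clause works like this. While the expression is true, the alert is pending. It fires only once the condition has held continuously for the full duration, and a single false evaluation resets it. The following sketch shows that behavior for a 5% error-rate threshold evaluated every 30 seconds (the group's `interval`). `firstFiringTime` is a hypothetical helper and the sample values are invented.

```javascript
// Returns the evaluation time (seconds) at which the alert would start firing, or null
function firstFiringTime(samples, threshold, forSeconds, intervalSeconds = 30) {
  let activeSince = null;
  for (let i = 0; i < samples.length; i++) {
    const t = i * intervalSeconds;
    if (samples[i] > threshold) {
      if (activeSince === null) activeSince = t; // Alert becomes pending
      if (t - activeSince >= forSeconds) return t; // Held long enough: firing
    } else {
      activeSince = null; // Condition cleared: pending state resets
    }
  }
  return null;
}

// A 90-second spike never fires a 5m alert; a sustained problem fires at t = 300s
console.log(firstFiringTime([0.01, 0.2, 0.2, 0.2, 0.01], 0.05, 300));
console.log(firstFiringTime(new Array(12).fill(0.08), 0.05, 300));
```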

Cost Optimization: The Hidden Challenge

Once we had everything running smoothly, we looked at our cloud bill and nearly had a heart attack. We were spending $45,000/month on container infrastructure. For a startup, that was unsustainable.

Right-Sizing Containers

Our first cost optimization was right-sizing containers. Remember those resource requests and limits we set based on monitoring? We revisited them after three months of production data and found we'd been over-provisioning.

For example, our authentication service had these resources:

resources:

requests:

memory: "512Mi"

cpu: "500m"

limits:

memory: "1Gi"

cpu: "2000m"

But actual usage data showed:

Memory: P99 = 280MB

CPU: P99 = 0.3 cores

We were requesting 512MB but only using 280MB at P99. Multiply that by 20 replicas, and we were wasting 4.6GB of memory capacity that could have been used for other pods.

We adjusted to:

resources:

requests:

memory: "320Mi" # P99 + 15% headroom

cpu: "350m" # P99 + 15% headroom

limits:

memory: "640Mi" # 2x requests

cpu: "1000m" # Allow bursting

This change alone saved us $3,200/month by allowing more efficient bin-packing of pods onto nodes.
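The sizing rule used above (P99 plus 15% headroom, rounded, with the memory limit at 2x the request) can be written down so it is applied the same way across services. This is a sketch under those assumptions. The helper names are hypothetical, rounding to the nearest 10 units is our guess at how 322Mi became 320Mi, and the CPU limit was set separately (1000m) rather than by this rule.

```javascript
// request = P99 + headroom, rounded to the nearest 10 units (Mi or millicores)
function rightSize(p99, headroomPct = 15, limitMultiplier = 2) {
  // Multiply by an integer percentage instead of a fractional factor like 1.15
  const request = Math.round((p99 * (100 + headroomPct)) / 100 / 10) * 10;
  return { request, limit: request * limitMultiplier };
}

// Requested-but-unused capacity across all replicas
function idleRequestCapacity(request, p99, replicas) {
  return (request - p99) * replicas;
}

console.log(rightSize(280)); // memory in Mi: { request: 320, limit: 640 }
console.log(rightSize(300).request); // CPU in millicores: 350
console.log(idleRequestCapacity(512, 280, 20)); // Mi idle across 20 replicas: 4640
```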

Spot Instances and Node Pools

The bigger savings came from using spot instances (AWS) / preemptible VMs (GCP) for stateless workloads. Spot instances are spare cloud capacity available at up to a 90% discount, with the caveat that they can be reclaimed at short notice: AWS gives a two-minute interruption warning, and GCP preemptible VMs get about 30 seconds.

We created two node pools:

- On-demand pool: for stateful services (databases, caches, queue workers)

- Spot pool: for stateless services (APIs, batch processors)

The key is making your stateless services resilient to node termination:

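apiVersion: apps/v1
kind: Deployment
metadata:
  name: api
spec:
  replicas: 10
  selector:
    matchLabels:
      app: api
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 3 # At most 3 pods down during a rollout
      maxSurge: 2 # Can add 2 extra during updates
  template:
    metadata:
      labels:
        app: api
    spec:
      # Prefer spot nodes, but fall back to on-demand if no spot capacity is available
      # (a nodeSelector would make spot a hard requirement)
      affinity:
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              preference:
                matchExpressions:
                  - key: node-type
                    operator: In
                    values: ["spot"]
      # Allow scheduling onto spot nodes (assumes they carry a spot=true:NoSchedule taint)
      tolerations:
        - key: spot
          operator: Equal
          value: "true"
          effect: NoSchedule
      # Graceful shutdown
      terminationGracePeriodSeconds: 30
      containers:
        - name: api
          image: api:v2.1.0
          lifecycle:
            preStop:
              exec:
                command: ["/bin/sh", "-c", "sleep 15"]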

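The preStop hook delays SIGTERM by 15 seconds. During that window the terminating pod is removed from Service endpoints, so load balancers stop sending it new requests while in-flight requests finish. Combined with our readiness probe that fails when isShuttingDown = true, this ensures graceful handling of spot instance terminations. Note that terminationGracePeriodSeconds includes the preStop sleep, so the application has the remaining 15 seconds after SIGTERM to shut down.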

This change saved us $18,000/month—a 40% reduction in compute costs. We've had spot instances terminated during production, and our users never noticed because we had enough replicas and proper graceful shutdown.

Horizontal Pod Autoscaling

We were running fixed replica counts (10 pods for API, 5 for workers, etc.) 24/7. But our traffic patterns showed:

- Peak: 12pm-8pm (2M requests/hour)

- Normal: 8pm-12am (800k requests/hour)

- Low: 12am-12pm (200k requests/hour)

We were over-provisioned during low traffic periods. We implemented Horizontal Pod Autoscaling (HPA):

apiVersion: autoscaling/v2

kind: HorizontalPodAutoscaler

metadata:

name: api-hpa

spec:

scaleTargetRef:

apiVersion: apps/v1

kind: Deployment

name: api

minReplicas: 3

maxReplicas: 30

metrics:

- type: Resource

resource:

name: cpu

target:

type: Utilization

averageUtilization: 70

- type: Resource

resource:

name: memory

target:

type: Utilization

averageUtilization: 80

behavior:

scaleDown:

stabilizationWindowSeconds: 300 # Wait 5 min before scaling down

policies:

- type: Percent

value: 50 # Scale down max 50% at a time

periodSeconds: 60

scaleUp:

stabilizationWindowSeconds: 0 # Scale up immediately

policies:

- type: Percent

value: 100 # Can double capacity

periodSeconds: 15

- type: Pods

value: 5 # Or add 5 pods

periodSeconds: 15

selectPolicy: Max # Use the higher value

The key settings:

- Scale up aggressively: During traffic spikes, we can double capacity or add 5 pods every 15 seconds

- Scale down conservatively: Wait 5 minutes to ensure traffic has actually decreased, then scale down max 50% at a time

- Min replicas: 3: Always maintain 3 replicas for availability

- Max replicas: 30: Cap to prevent runaway scaling

This reduced our average replica count from 10 to 6.5, saving another $7,800/month while maintaining performance during peak traffic.
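The replica counts the HPA chooses follow the documented Kubernetes rule: desired = ceil(currentReplicas * currentMetric / targetMetric). No change is made when that ratio is within a tolerance (0.1 by default), and when several metrics are configured the largest proposal wins. The sketch below is simplified: it ignores the `behavior` policies and stabilization windows, and `desiredReplicas` is an illustrative name.

```javascript
// metrics: [{ current, target }], e.g. average CPU utilization 90 vs target 70
function desiredReplicas(currentReplicas, metrics, { minReplicas, maxReplicas, tolerance = 0.1 }) {
  const proposals = metrics.map(({ current, target }) => {
    const ratio = current / target;
    if (Math.abs(ratio - 1) <= tolerance) return currentReplicas; // Within tolerance: keep
    return Math.ceil(currentReplicas * ratio);
  });
  const desired = Math.max(...proposals); // Highest proposal across metrics wins
  return Math.min(maxReplicas, Math.max(minReplicas, desired)); // Clamp to min/max
}

// CPU at 90% (target 70) and memory at 60% (target 80): CPU's proposal wins
console.log(desiredReplicas(10, [{ current: 90, target: 70 }, { current: 60, target: 80 }], { minReplicas: 3, maxReplicas: 30 }));
```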

Custom Metrics Autoscaling

CPU and memory-based autoscaling works well for most services, but our batch processing service needed custom metrics. It processes jobs from a queue, and the queue depth is a better indicator of scaling needs than CPU usage.

We implemented custom metrics autoscaling:

// Expose queue depth metric

const queueDepth = new promClient.Gauge({

name: 'job_queue_depth',

help: 'Number of jobs waiting in queue',

register,

});

// Update metric every 30 seconds

setInterval(async () => {

const depth = await queue.getDepth();

queueDepth.set(depth);

}, 30000);

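Then we exposed the metric to Kubernetes through a metrics adapter (for example, Prometheus Adapter; the HPA cannot query Prometheus directly) and configured the HPA to scale on it: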

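apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: worker-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: worker
  minReplicas: 2
  maxReplicas: 20
  metrics:
    # Queue depth is one global value, so use an External metric:
    # desired replicas = ceil(queue depth / averageValue).
    # Assumes the adapter reports the depth once (e.g. max across pods), not summed.
    - type: External
      external:
        metric:
          name: job_queue_depth
        target:
          type: AverageValue
          averageValue: "100" # Target 100 jobs per pod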

Now when the queue backs up, we automatically scale workers to handle the load. When the queue is empty, we scale down to minimum replicas. This saved another $4,000/month by not running idle workers.
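For the queue-based target, the replica count the autoscaler aims for is the total queue depth divided by 100 jobs per pod, clamped to the 2 to 20 range. The sketch also shows why the depth must be counted once. If every worker pod reports the same global depth and the HPA averages per-pod values (a `Pods` metric), the proposal grows with the number of pods. Both helper names are hypothetical.

```javascript
// Intended behavior: one global depth divided by jobs per pod, clamped
function workersForQueue(queueDepth, { jobsPerPod = 100, minReplicas = 2, maxReplicas = 20 } = {}) {
  const desired = Math.ceil(queueDepth / jobsPerPod);
  return Math.min(maxReplicas, Math.max(minReplicas, desired));
}

// Pitfall: every pod reports the full depth, and the per-pod average is
// multiplied by the current pod count, so more pods means a bigger proposal
function podsAverageProposal(podCount, depthReportedByEachPod, jobsPerPod = 100) {
  return Math.ceil(podCount * (depthReportedByEachPod / jobsPerPod));
}

console.log(workersForQueue(450)); // 5 workers for 450 queued jobs
console.log(podsAverageProposal(5, 450)); // 23: the proposal keeps growing with pod count
```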

Disaster Recovery and Backup Strategies

Six months into production, we had a nightmare scenario: our primary database cluster failed due to a storage issue. We had backups, but we'd never actually tested restoring them. Turns out, our backup process was broken for three weeks, and we didn't know.

We lost 2 hours of data and spent 6 hours restoring from the last good backup. It was a wake-up call about disaster recovery.

Backup Verification

We now have automated backup verification:

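#!/bin/bash
# backup-verify.sh - Runs daily
set -euo pipefail

# Compute the filename once so every step uses the same file
BACKUP_FILE="/backups/backup-$(date +%Y%m%d).sql"

alert() {
  echo "Backup verification failed: $1" | mail -s "BACKUP FAILURE" ops@company.com
  exit 1
}

# Take backup
pg_dump -h "$DB_HOST" -U "$DB_USER" -d "$DB_NAME" > "$BACKUP_FILE" || alert "pg_dump failed"

# Verify backup by restoring into a freshly recreated test database
dropdb -h "$TEST_DB_HOST" -U "$DB_USER" --if-exists test_restore || alert "could not drop test_restore"
createdb -h "$TEST_DB_HOST" -U "$DB_USER" test_restore || alert "could not create test_restore"
# ON_ERROR_STOP makes psql exit non-zero on the first SQL error
psql -h "$TEST_DB_HOST" -U "$DB_USER" -d test_restore -v ON_ERROR_STOP=1 -q -f "$BACKUP_FILE" || alert "restore failed"

# Run smoke tests against restored database: key tables must contain rows
for table in users orders; do
  count=$(psql -h "$TEST_DB_HOST" -U "$DB_USER" -d test_restore -tAc "SELECT COUNT(*) FROM $table;") || alert "query on $table failed"
  [ "$count" -gt 0 ] || alert "$table is empty after restore"
done

# Tests passed: upload to S3
aws s3 cp "$BACKUP_FILE" s3://our-backups/ || alert "S3 upload failed"
echo "Backup verified and uploaded successfully"

# Cleanup old backups (keep 30 days)
find /backups -name "backup-*.sql" -mtime +30 -delete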

This script runs daily and alerts us if backups fail. We've caught several issues this way before they became disasters.

Multi-Region Deployments

After the database incident, we also implemented multi-region deployments for critical services. Our architecture now spans three AWS regions:

- Primary: us-east-1 (handles 80% of traffic)

- Secondary: us-west-2 (handles 15% of traffic)

- DR: eu-west-1 (standby, handles 5% of traffic)

Each region runs a complete copy of our application stack. We use Route53 with health checks to route traffic:

# Route53 configuration (simplified)

- record: api.company.com

type: A

routing: weighted

health_check: true

regions:

- region: us-east-1

weight: 80

health_check_path: /health/ready

- region: us-west-2

weight: 15

health_check_path: /health/ready

- region: eu-west-1

weight: 5

health_check_path: /health/ready

If a region fails health checks, Route53 automatically routes traffic to healthy regions. We've tested this by deliberately taking down us-east-1, and traffic seamlessly shifted to us-west-2 with no customer impact.
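With weighted records and health checks, each healthy region's share is its weight divided by the sum of the healthy records' weights. When us-east-1 fails, its traffic is redistributed across the remaining regions in proportion to their weights. Route 53 also fails open: if every record is unhealthy, it answers as if all were healthy. Here is a sketch of that arithmetic; `trafficShares` is a hypothetical helper.

```javascript
// records: [{ region, weight, healthy }]
function trafficShares(records) {
  const healthy = records.filter((r) => r.healthy);
  // If every record is unhealthy, Route 53 answers as if all were healthy
  const pool = healthy.length > 0 ? healthy : records;
  const total = pool.reduce((sum, r) => sum + r.weight, 0);
  return Object.fromEntries(
    records.map((r) => [r.region, pool.includes(r) ? r.weight / total : 0])
  );
}

const regions = [
  { region: 'us-east-1', weight: 80, healthy: false }, // Simulated outage
  { region: 'us-west-2', weight: 15, healthy: true },
  { region: 'eu-west-1', weight: 5, healthy: true },
];
console.log(trafficShares(regions)); // us-west-2 gets 75%, eu-west-1 gets 25%
```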

The cost of multi-region deployment is significant ($15,000/month additional), but for a production system handling 50M requests daily, it's insurance we can't afford to skip.

CI/CD Pipeline Optimization

Our original CI/CD pipeline was slow and unreliable. A simple code change took 45 minutes to deploy to production. Here's how we optimized it:

Parallel Builds

We parallelized our Docker builds:

# .github/workflows/deploy.yml
name: Deploy
on:
  push:
    branches: [main]
jobs:
  build:
    runs-on: ubuntu-latest
    strategy:
      matrix:
        service: [api, worker, auth, notifications]
    steps:
      - uses: actions/checkout@v4
      - name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3
      # Pushing requires registry credentials (secret names are hypothetical)
      - name: Log in to registry
        uses: docker/login-action@v3
        with:
          registry: registry.company.com
          username: ${{ secrets.REGISTRY_USERNAME }}
          password: ${{ secrets.REGISTRY_PASSWORD }}
      - name: Cache Docker layers
        uses: actions/cache@v4
        with:
          path: /tmp/.buildx-cache
          key: ${{ runner.os }}-buildx-${{ matrix.service }}-${{ github.sha }}
          restore-keys: |
            ${{ runner.os }}-buildx-${{ matrix.service }}-
      - name: Build and push
        uses: docker/build-push-action@v6
        with:
          context: ./services/${{ matrix.service }}
          push: true
          tags: registry.company.com/${{ matrix.service }}:${{ github.sha }}
          cache-from: type=local,src=/tmp/.buildx-cache
          cache-to: type=local,dest=/tmp/.buildx-cache-new,mode=max
      # Replace the old cache with the new one so it doesn't grow without bound
      - name: Move cache
        run: |
          rm -rf /tmp/.buildx-cache
          mv /tmp/.buildx-cache-new /tmp/.buildx-cache

This builds all four services in parallel instead of sequentially, reducing build time from 25 minutes to 7 minutes.

Canary Deployments

We also implemented canary deployments to catch issues before they affect all users:

# Canary deployment
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-canary
spec:
  replicas: 2 # ~10% of traffic, assuming a Service that selects only app: api
  selector:
    matchLabels:
      app: api
      version: canary
  template:
    metadata:
      labels:
        app: api
        version: canary
    spec:
      containers:
        - name: api
          image: api:$NEW_VERSION # kubectl does not expand variables; substitute before applying (e.g. envsubst)
---
# Production deployment
apiVersion: apps/v1
kind: Deployment
metadata:
  name: api-production
spec:
  replicas: 18 # ~90% of traffic
  selector:
    matchLabels:
      app: api
      version: production
  template:
    metadata:
      labels:
        app: api
        version: production
    spec:
      containers:
        - name: api
          image: api:$CURRENT_VERSION

Our deployment process:

- Deploy new version to canary (2 pods, 10% traffic)

- Monitor error rates, response times, and custom metrics for 15 minutes

- If metrics are healthy, gradually roll out to production

- If metrics degrade, automatically roll back the canary

This caught a critical bug last month that only manifested under production load. Because the canary served only about 10% of traffic, the bug reached a small fraction of users, and we rolled back before it impacted everyone.
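With a Service that selects `app: api` across both Deployments, the canary's share of requests is roughly its share of ready pods: 2 / (2 + 18) = 10%. The promote-or-rollback decision can be sketched as a comparison against the stable version. The thresholds here (at most 0.5 percentage points more errors, P95 within 1.2x of baseline) and the helper names are hypothetical placeholders.

```javascript
// Approximate traffic share when one Service load-balances across both Deployments
function canaryTrafficShare(canaryReplicas, stableReplicas) {
  return canaryReplicas / (canaryReplicas + stableReplicas);
}

// canary/baseline: { errorRate (ratio), p95 (seconds) } over the same 15-minute window
function canaryVerdict(canary, baseline, { maxErrorRateIncrease = 0.005, maxLatencyRatio = 1.2 } = {}) {
  const errorOk = canary.errorRate - baseline.errorRate <= maxErrorRateIncrease;
  const latencyOk = canary.p95 <= baseline.p95 * maxLatencyRatio;
  return errorOk && latencyOk ? 'promote' : 'rollback';
}

console.log(canaryTrafficShare(2, 18)); // 0.1
console.log(canaryVerdict({ errorRate: 0.03, p95: 0.21 }, { errorRate: 0.003, p95: 0.2 })); // 'rollback'
```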

Final Thoughts on Container Orchestration

After a year of running containers at scale, here's what I wish I'd known from the start:

Start with the end in mind: Design for production from day one. Don't build on latest tags and hardcoded configs thinking you'll fix it later. You won't have time when you're firefighting production issues.

Invest in observability early: Structured logging, metrics, and tracing aren't overhead—they're essential tools. We spent more time adding them after incidents than we would have spent building them in from the start.

Automate everything: Manual deployments don't scale. Manual backup verification doesn't scale. Manual security patching doesn't scale. If you're doing it more than once, automate it.

Test failure scenarios: Don't wait for production to discover how your system handles failures. Kill pods, terminate nodes, overload services, and see what breaks. Fix it before customers find it.

Cost optimization is ongoing: Our infrastructure costs went from $45k/month to $28k/month through right-sizing, spot instances, and autoscaling. Review your costs monthly and optimize continuously.

Conclusion

Scaling from 100k to 50M requests per day with Docker taught us lessons that no tutorial or documentation could have prepared us for. The journey was painful—we had outages, lost data, burned money on over-provisioned infrastructure, and made every rookie mistake in the book.

But we emerged with a production-grade containerization strategy that's resilient, secure, cost-effective, and truly scalable. The practices in this article represent thousands of hours of debugging, optimization, and incident response distilled into actionable patterns.

Key Takeaways

Image optimization is fundamental: Multi-stage builds, Alpine base images, and layer caching reduced our images from 1.2GB to 180MB. This wasn't about perfectionism—it was about being able to scale from 10 to 30 pods in under 90 seconds during traffic spikes instead of waiting 12 minutes.

Security cannot be an afterthought: Running as non-root, using read-only filesystems, pinning image digests, and implementing proper secrets management prevented breaches that could have ended our company. The penetration test that found 47 vulnerabilities was our wake-up call.

Resource management requires data, not guesses: Two weeks of production monitoring to understand P50/P95/P99 usage patterns, followed by setting requests at P95 and limits at 2x requests, eliminated OOM kills and resource contention. The CPU throttling issue we discovered taught us that dogmatic adherence to best practices can hurt performance.

Observability is non-negotiable at scale: Structured JSON logging, distributed tracing with OpenTelemetry, and comprehensive Prometheus metrics transformed debugging from "grep through logs for hours" to "see the exact failure path in 30 seconds." Without this, managing 200+ containers would be impossible.

Cost optimization is a continuous process: Right-sizing containers, using spot instances for stateless workloads, and implementing intelligent autoscaling reduced our monthly infrastructure costs from $45k to $28k—a 38% reduction—while improving performance and reliability.

Disaster recovery must be tested: Our database failure taught us that untested backups are worthless. Automated backup verification, multi-region deployments, and regular disaster recovery drills are now non-negotiable parts of our operations.

CI/CD pipeline efficiency compounds: Reducing deployment time from 45 minutes to 12 minutes through parallel builds, layer caching, and canary deployments means we can ship fixes faster and iterate more quickly. The canary deployment that caught a critical bug before full rollout paid for itself immediately.

What We'd Do Differently

If we could start over, we would:

- Implement observability on day one: Don't wait until you have a production incident to add structured logging and metrics

- Use multi-stage builds from the start: The effort to refactor Dockerfiles later was significant

- Set up proper secrets management immediately: Rotating credentials after accidentally committing them was painful

- Design for failure from the beginning: Graceful shutdown, circuit breakers, and retry logic should be built in, not bolted on

- Automate cost monitoring: We burned money for months before realizing we were over-provisioned

The Path Forward

We're not done learning. Our roadmap includes:

- Service mesh adoption (Istio or Linkerd) for better traffic management, observability, and security

- GitOps workflows (ArgoCD) for declarative infrastructure management

- Advanced autoscaling based on custom business metrics, not just CPU/memory

- Chaos engineering to proactively discover failure modes

- FinOps practices for even better cost optimization

Container orchestration at scale is a journey of continuous improvement. The patterns that work at 50M requests will need evolution at 500M. But the fundamentals—small secure images, proper resource management, comprehensive observability, and resilient architecture—remain constant.

A Final Note

Every practice in this article came from a real production incident, performance problem, or security scare. The 180MB image size wasn't arbitrary—it was the result of iterating until deployments were fast enough during traffic spikes. The circuit breaker configuration wasn't from a tutorial—it was tuned after we DDoS'd ourselves with retry storms. The backup verification script exists because we lost 2 hours of data when backups were silently broken.

Production is an unforgiving teacher. These lessons cost us outages, lost revenue, and many sleepless nights. But they transformed us from developers who could "get containers running" into engineers who could build and operate production-grade containerized systems at scale.

If you're earlier in your containerization journey, I hope our experiences help you avoid some of the pain we went through. And if you've scaled beyond where we are, I'd love to learn from your experiences. We're all figuring this out together, one incident and one optimization at a time.

The infrastructure that handles our 50M daily requests today looks nothing like what we started with. It's more secure, more efficient, more observable, and more resilient. But most importantly, it's built on lessons learned from real-world production experience—the only teacher that truly matters.

All practices, configurations, and code examples in this article are battle-tested in production. We've been running these patterns for 8+ months across 200+ containers handling 50M+ requests daily. Your specific requirements may differ, but these fundamentals apply to any containerized system at scale.
